Things learnt - Week 34

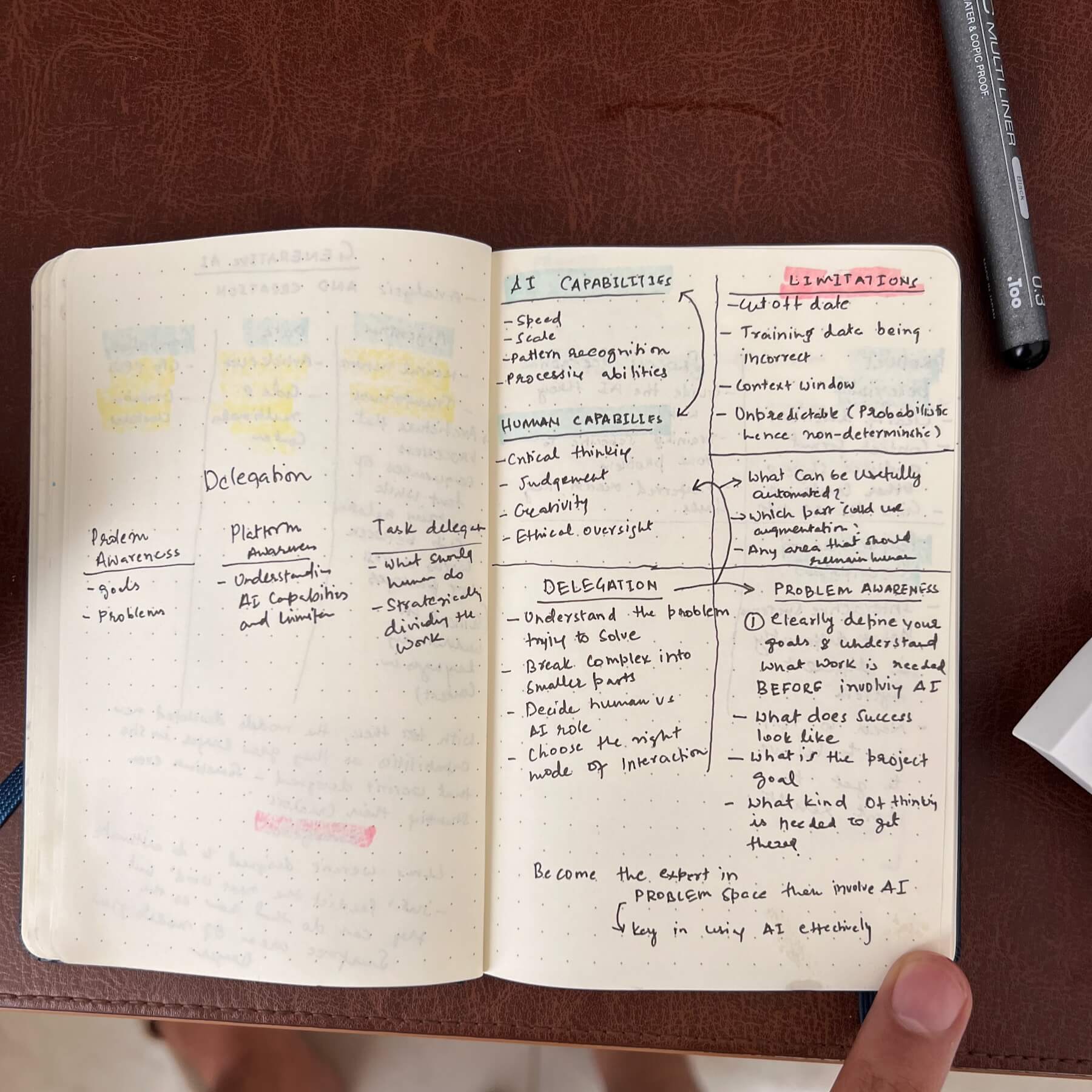

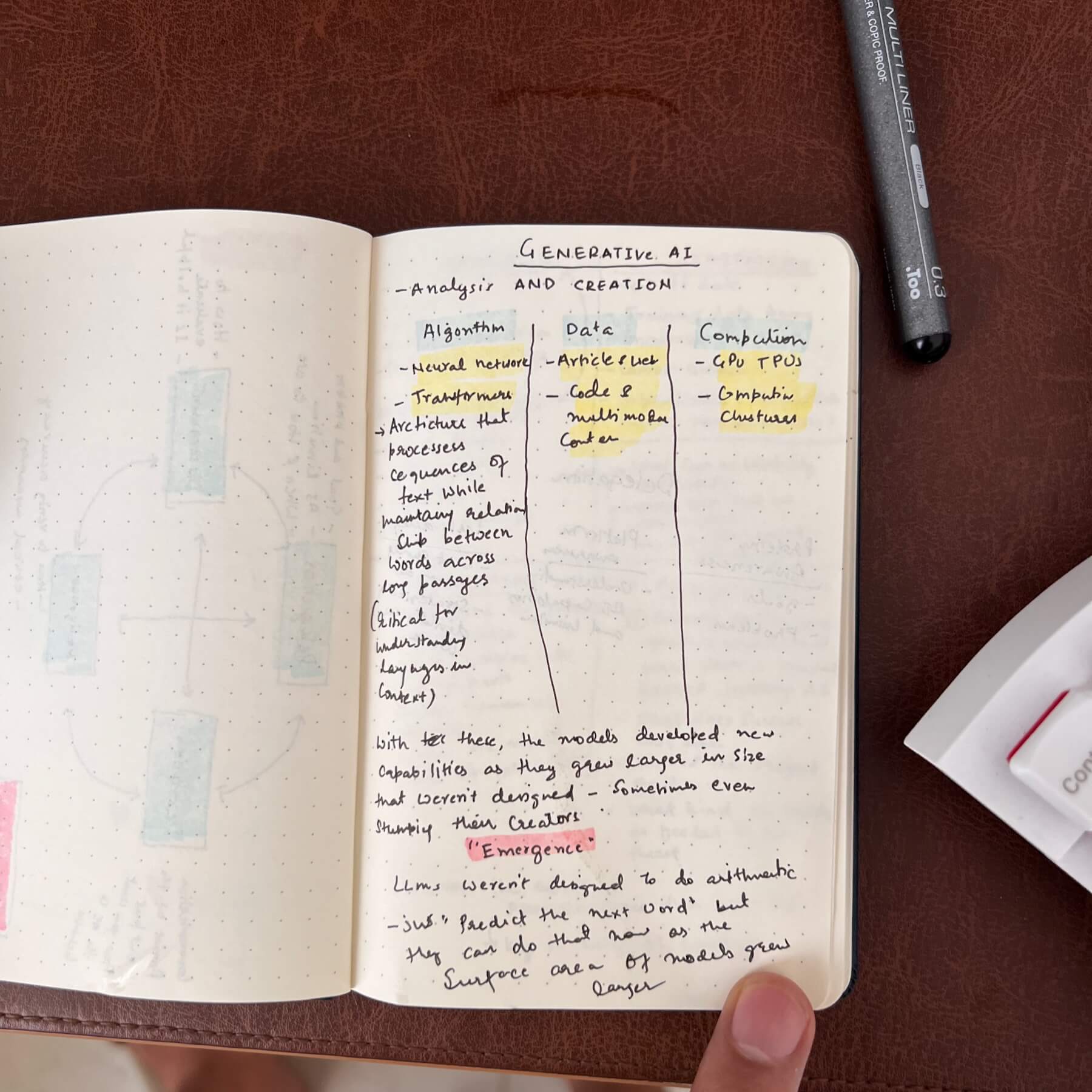

Instead of things read, last week was more about things learnt. I recently completed Anthropic's AI Fluency: Framework & Foundations course (see proof), and it completely changed how I think about working with AI tools. Here are some insights that may seem obvious.

Treat AI like a human (with instructions)

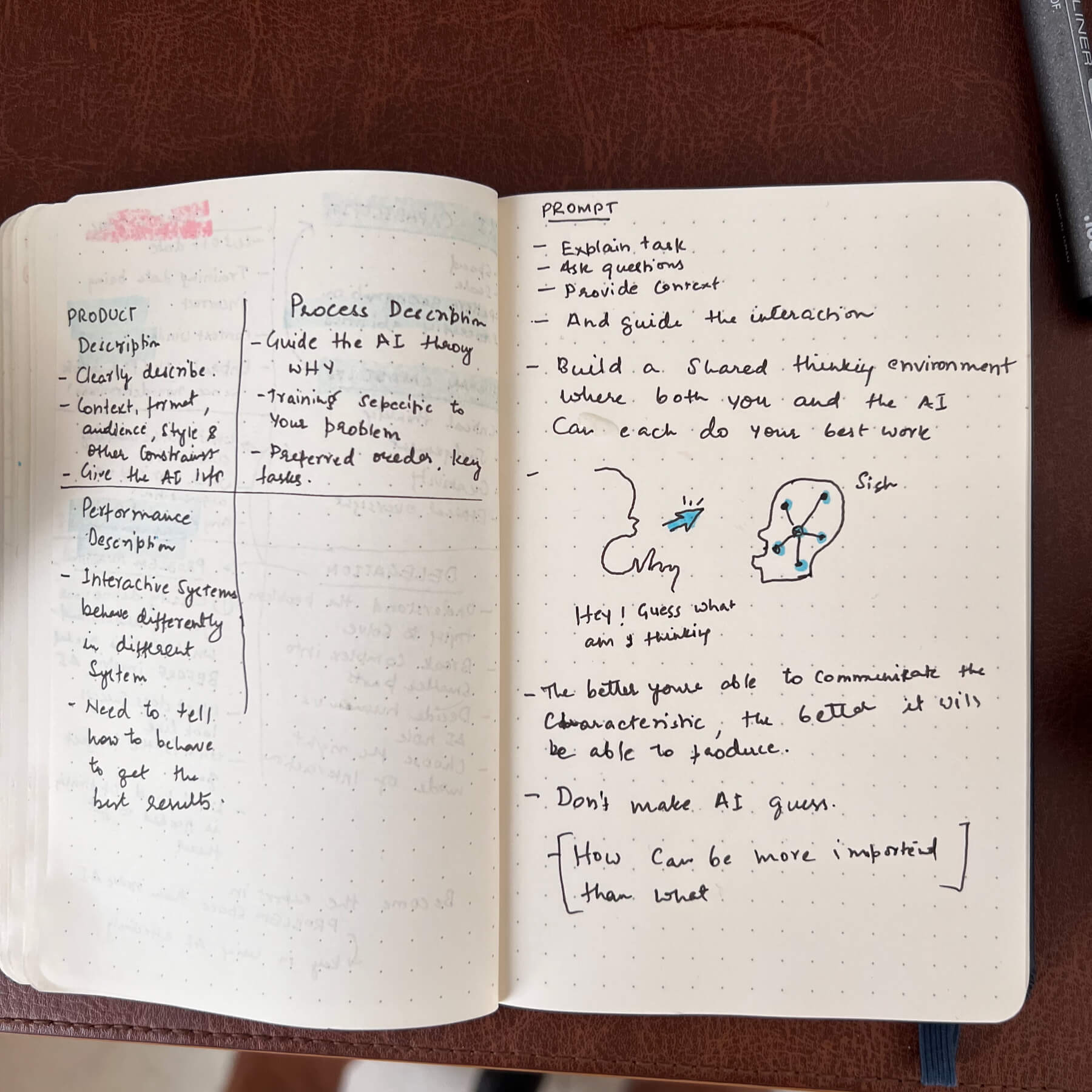

The biggest revelation for me was how straightforward effective prompting actually is. You just need to treat AI like a human—specifically, like an incredibly sharp intern who needs explicit instructions about what you want and how you want it delivered.

I used to think I could just throw a task at AI and expect perfect results. For example, I'd upload a wireframe and simply ask: "Review this wireframe and suggest improvements." The results were generic and not particularly helpful.

Now I approach it completely differently: "This is a wireframe from the checkout page of our e-commerce app. On this page, users are trying to complete their purchase. Suggest ways to streamline the checkout process without increasing visual cognitive overload for users. Write pros and cons for each suggestion."

Providing that context is crucial because the vast universe of data the model was trained on contains many versions of what "good" looks like. Your examples and context help it understand what "good" looks like for your particular use case.

Give AI space to think

Something I never considered before the course was how important it is to give AI room for deeper thinking. Simply asking it to "think thoroughly, consider potential constraints, and explore various approaches before recommending the best solution" transforms the quality of responses. My approach now is to always ask AI to consider multiple potential solutions and constraints, think about how they apply to my particular case, and ask me if something is missing to perform a thorough analysis.

Role playing

I'd been doing this unconsciously thanks to various Reddit posts, but the course helped me understand why role prompts work so well. There's a huge difference between asking for a plain explanation and asking "as a teacher to 10-year-old students, explain how rainbows occur" or "as a UX design expert, review this website wireframe and suggest three improvements focusing on user navigation and accessibility."

The role gives AI a framework for how to approach the problem and what lens to use when generating responses.

No substitute for domain expertise

Here's perhaps the most important insight: there's no alternative to building deep domain expertise if you want to use AI effectively. In my case, as a designer, AI won't solve my problems if I don't understand what good UX looks like or the fundamental UX principles that should guide navigation design or onboarding processes.

Design is essentially about managing tradeoffs, and knowing which tradeoffs to manage requires deep awareness of your users and their problems. Unless I'm familiar with usability heuristics (like visibility of system status or match between system and the real world), I can't guide AI to follow those principles in its suggestions.

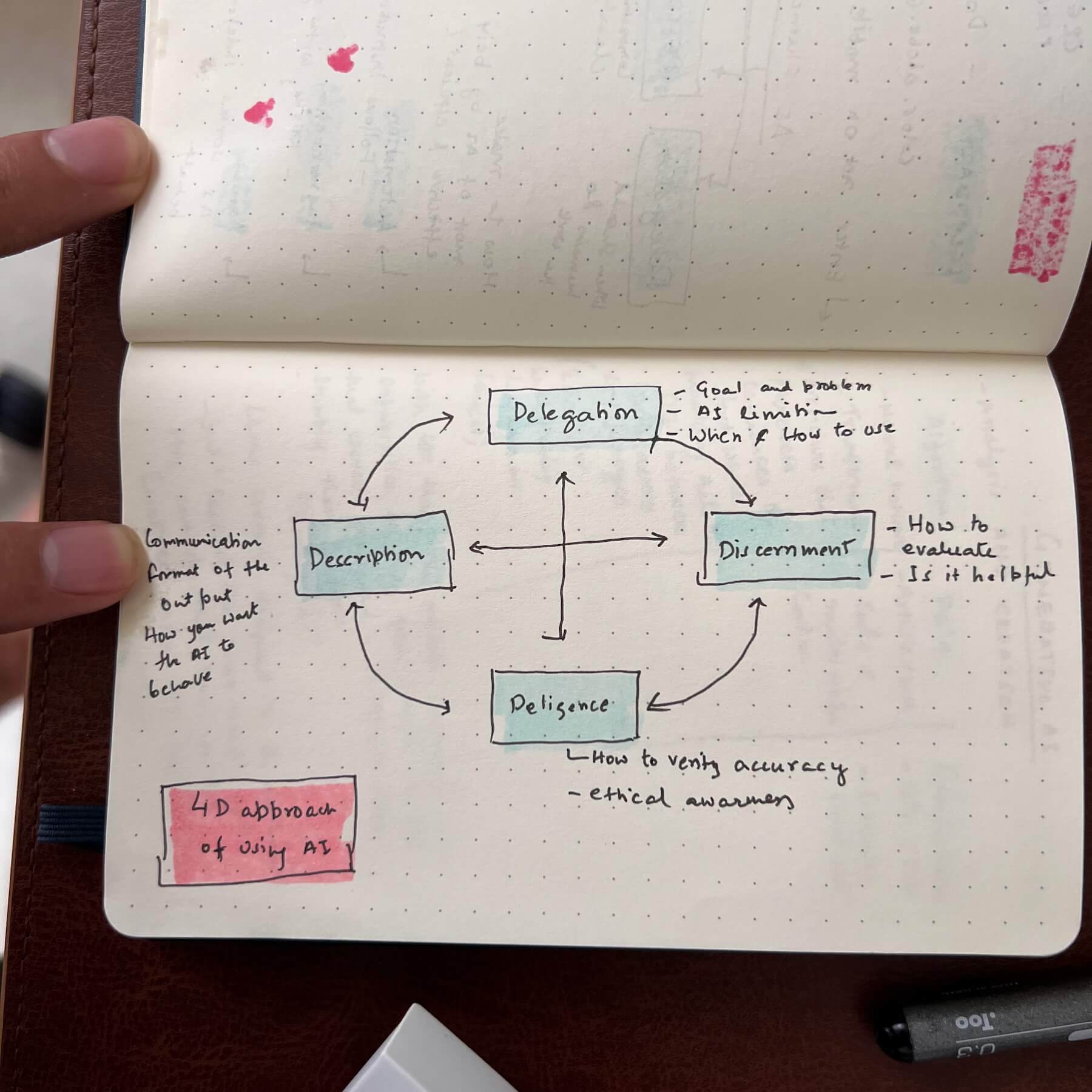

The effective use of AI is always a cycle between describing and discerning. AI needs to be guided by human judgment, and building that judgment requires knowledge and experience that no tool can replace.

How my approach has changed

My approach to using AI has changed for the better. For every query, I now take a step back and think through:

- How do I want to structure this request for the best results?

- What context do I need to provide?

- What are some examples of what "good" looks like in this situation?

- What kind of output format do I want?

- What role should AI play in this interaction?

This might seem like more work upfront, but the quality of results makes it absolutely worth the extra few seconds of planning.
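For anyone who sends prompts from a script rather than a chat window, the checklist above can be turned into a small template. This is only a rough Python sketch: `PromptSpec` and `build_prompt` are names invented for illustration, not something from the course or from any library, and the wording of each section is just one possible phrasing.

```python
from dataclasses import dataclass, field


@dataclass
class PromptSpec:
    """Hypothetical container for the checklist items (illustrative only)."""
    task: str                      # what you want done
    context: str = ""              # background the model cannot guess
    role: str = ""                 # the lens the model should use
    examples: list[str] = field(default_factory=list)  # what "good" looks like
    output_format: str = ""        # how the answer should be delivered
    think_first: bool = True       # ask for options and constraints up front


def build_prompt(spec: PromptSpec) -> str:
    """Assemble the non-empty checklist items into one prompt string."""
    parts = []
    if spec.role:
        parts.append(f"Act as {spec.role}.")
    if spec.context:
        parts.append(f"Context: {spec.context}")
    parts.append(f"Task: {spec.task}")
    if spec.examples:
        bullets = "\n".join(f"- {example}" for example in spec.examples)
        parts.append("Examples of what good looks like:\n" + bullets)
    if spec.output_format:
        parts.append(f"Output format: {spec.output_format}")
    if spec.think_first:
        parts.append(
            "Before answering, consider several approaches and their "
            "constraints, and ask me if anything is missing."
        )
    # Blank lines between sections keep the prompt easy to scan.
    return "\n\n".join(parts)


if __name__ == "__main__":
    spec = PromptSpec(
        role="a UX design expert",
        context="This is a wireframe from the checkout page of our e-commerce app.",
        task="Suggest ways to streamline the checkout process.",
        output_format="Pros and cons for each suggestion.",
    )
    print(build_prompt(spec))
```

Empty fields are simply skipped, so the same template works for a quick question and for a fully specified review request.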

Some handwritten notes: